This guy is very, very scared of DeepSeek and all the malicious things it might do, seemingly because it’s Chinese. When the comments point out that ChatGPT is probably worse, he disagrees without giving any reasoning.

Transcription:

DeepSeek as a Trojan Horse Threat.

DeepSeek, a Chinese-developed AI model, is rapidly being installed into productive software systems worldwide. Its capabilities are impressive: hyper-advanced data analysis, seamless integration, and an almost laughably low price. But here’s the problem: nothing this cheap comes without a hidden agenda.

What’s the real cost of DeepSeek?

- Suspiciously Cheap: Advanced models like DeepSeek aren’t “side projects.” They take massive investments, resources, and expertise to develop. If it’s being offered at a fraction of its value, ask yourself: who’s really paying for it?

- Backdoors Everywhere: DeepSeek’s origin raises alarm bells. The more systems it infiltrates, the more it becomes a potential vector for mass compromise. Think backdoors, data exfiltration, and remote access at scale: hidden vulnerabilities deliberately built in.

- Wide Adoption = Global Risk: From finance to healthcare, DeepSeek is being installed across critical systems at an alarming rate. If adoption continues unchecked, 80% of our systems could soon be compromised.

- The Trojan Horse Effect: DeepSeek is a textbook example of a Trojan horse strategy: lure organizations with a cheap, powerful tool, infiltrate their systems, and quietly map or control them. Once embedded, reversing the damage will be nearly impossible.

The Fairytale Isn’t Real

The story of DeepSeek being a “low-cost, side project” is just that: a fairytale. Technology like this isn’t developed without strategic motives. In the world of cyber warfare, cheap tools often come at the highest cost.

What Can We Do?

Audit your systems: Is DeepSeek already embedded in your critical infrastructure?

Ask the hard questions: Why is this so cheap? Where’s the transparency?

Take immediate action: Limit adoption before it’s too late. The price may look attractive, but the real cost could be our collective security.

Don’t fall for the fairytale.

This supposed Chief Technology Officer appears to understand very little about how Technology actually works.

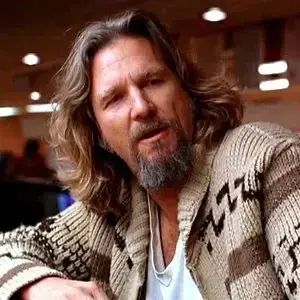

Someone is afraid of getting asked why they wasted so much money on AI.

“It is difficult to get a man to understand something, when his salary depends upon his not understanding it!”

The CTO at my last job was pulling down millions a year despite barely knowing anything about anything, and being little more than a glorified bully. He got there via project management, after all.

Not that these concerns aren’t real, necessarily, but they certainly aren’t unique to DeepSeek. The real win is in propaganda: if you have a very capable, cheap model that you can get everyone using, you can push the party line on sensitive issues much more effectively beyond your borders. I don’t think DeepSeek is a good tool for that yet, because it just refuses to discuss those issues, but I wouldn’t be surprised if that is the direction they take with future models.

Still, the fact that it’s heavily censored when it comes to sensitive CCP issues makes it a no from me.

ChatGPT and Copilot raise the same concerns, or worse, for me.

I disagree strongly, ChatGPT will gladly tell you all about the My Lai Massacre for example. Not to say it’s perfect or completely uncensored but to say it’s worse or the same…I just can’t get there.

It’s not about the censorship for me (though I recognize that was your main point, I should have made a top level comment), it’s about how it infiltrates our computers, tech and daily lives to the point where we become dependent on it. I’m worried that at any moment, the controlling entity could change it on a whim, publicly or covertly.

I see what you mean. I agree with that, and in that sense DeepSeek is actually a really good thing because it gives some hope that you don’t need insane amounts of money for a powerful model. Let’s hope access and development don’t get too concentrated.

OpenAI captures everything you feed it, while the other orgs that concern you provide models that can be run locally without an internet connection. I can look Tiananmen Square up on Wikipedia, but none of us can control what OpenAI does with our data.

From what others have said about DeepSeek, you can run the AI model on your own hardware, and the censoring is applied on the hosted service’s servers after the model outputs its response.

Lol China is shit but not everything from China is a Communist party psyop

Also you can run it locally which eliminates all concerns.

Not all, they likely still embed some pro-CCP nonsense in the model. It’s unlikely to be a security issue to your machine, but it could alter public perception, which could be in China’s interests.

Whether that’s an actual problem that needs action is another issue. I don’t know about you, but my intended use-cases have very little risk of indoctrination (e.g. code analysis and generation).

Ah yeah, that’s true. I’m not really knowledgeable in AI training, but can’t you use the DeepSeek R1 model as a base model and fine-tune it on more international data (like adding some Tiananmen Square knowledge to it so it produces actual facts)?

I’m not super knowledgeable either, so I don’t know if models can easily be extended like that. But you can always sample from multiple models.
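For what it’s worth, “sampling from multiple models” could look something like the sketch below: ask several models the same question and keep the most common answer, along with how much they agreed. Everything here is a stand-in. `Model`, `majority_answer`, and the lambdas are hypothetical names for illustration, not any real model’s API.

```python
from collections import Counter
from typing import Callable, Sequence, Tuple

# A "model" here is just any function from prompt to answer text.
# In practice each one would wrap a locally hosted or remote model (assumption).
Model = Callable[[str], str]


def majority_answer(models: Sequence[Model], prompt: str) -> Tuple[str, float]:
    """Ask every model the same prompt and return (most common answer, agreement share)."""
    if not models:
        raise ValueError("need at least one model")
    # Normalise lightly so trivial whitespace/case differences don't split the vote.
    answers = [model(prompt).strip().lower() for model in models]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / len(answers)


if __name__ == "__main__":
    # Stand-in models returning canned answers, purely for demonstration.
    fake_models = [
        lambda prompt: "1989",
        lambda prompt: " 1989 ",
        lambda prompt: "I cannot discuss that",
    ]
    answer, agreement = majority_answer(fake_models, "What year was it?")
    print(answer, agreement)
```

A low agreement share is a hint that at least one model is refusing or slanting the answer, which is the case worth checking by hand.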

If pro-CCP material had been embedded in it, it wouldn’t need censorship in place to block trigger words (lol)

It’s critical of China in every way except those specific events. If it had been trained to push pro-CCP material, it wouldn’t lock up but would argue with you instead: for example, that the Uyghur genocide has no concrete proof, orrrr that there are studies showing the camps are just detention camps for terrorists, orrrr that Tiananmen was started by the students and escalated into a tragedy, orrrr that the tank man was just an individual trying to speak with the officers rather than an act of heroism

But it doesn’t do that and instead focuses on censorship

Maybe they’ll add that to the next gen. Or maybe not, I guess we’ll see.

“Suspiciously cheap”

Tell that to every writer who took decades honing their craft, just for western AI to come in and hoover it all up and sell it in a monthly subscription.

Fucking jackass.

western

And what exactly does eastern do?

I think they hoovered up the stuff the western one already hoovered up, for bonus funny points.

“Thieves mad their stuff gets stolen!”

ROFL He’s sweating so much because DeepSeek is proving their little money making scam shouldn’t be as expensive and resource intensive as it is! So he’s out here trying to shame DeepSeek, which will make investors ask a lot of hard questions and retract funding for their AI Lie. If DeepSeek could burst the bubble of American-made LLMs, I’d be tickled pink. I’d naturally never use it, as LLMs are really only good for spellchecking and grammar in my opinion (they never should’ve strayed further than that without proper research and a true code of ethics that wouldn’t be constantly overstepped).

I love how much this C-Suite shitbag is malding at the moment!

Nah, LLMs have uses. As a chef I can plug in ingredients and it will generate good combinations that help inspire me. For D&D it can help fill in a few gaps I didn’t think of. It’s good as a sounding board for my creativity.

Can’t you run it locally because it’s open source? Like yeah, don’t implement software running on servers you don’t control, duh! The same is true of ChatGPT, except that you cannot run that yourself, so DeepSeek is actually safer for companies. All these products that just send requests to OpenAI servers are stupid anyway. OpenAI is working closely with a fascist government now; do you really want your personal data to go through them?

It is not open source. It just has its weights published. Both the source code and the training data are as closed source as OpenAI’s.

Yes, you can run it locally. I just got done setting up a version to run on my local machine, and I’m running a single 3090.

If it comes from China, I don’t trust it.

You shouldn’t really be trusting OpenAI any more, though.

Who said I use ANY Abominable Intelligence?

Neither do I, but I’ll still use it if it saves me time even with verifying its results.

So, basically the old anti-Linux FUD with some anti-China sentiment sprinkled on top?

That’s stupid. I’m anti-anything-not-FOSS because I am not going to trust anyone I don’t know.

Same note, I am not going to trust any AI. Ever. I don’t give a flying anything about anything to trust anyone or thing I don’t know. That’s stupid.

My theory on how DeepSeek managed to beat out ChatGPT: all LLMs do is try to guess what the next token is, based on statistics. Making the statistics more precise requires exponentially more training data and weights. You can see that if you compare model sizes: they tend to double for the next level of model.

So, as a consequence, you can build a model half as big, and only sacrifice a fraction of the performance.
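To put rough numbers on that intuition, here is a minimal sketch that assumes loss follows a simple power law in parameter count, `loss(N) = A * N ** (-ALPHA)`. The constants `A` and `ALPHA` and the function names are illustrative assumptions picked for the example, not measurements of DeepSeek or ChatGPT.

```python
# Assumed power-law scaling: loss(N) = A * N ** (-ALPHA).
# Both constants are illustrative, not fitted to any real model family.
A = 10.0
ALPHA = 0.076


def loss(n_params: float) -> float:
    """Hypothetical loss for a model with n_params parameters."""
    return A * n_params ** (-ALPHA)


def halving_penalty(alpha: float = ALPHA) -> float:
    """Relative loss increase when the parameter count is cut in half."""
    # loss(N / 2) / loss(N) = 2 ** alpha, independent of N under this assumption.
    return 2.0 ** alpha - 1.0


if __name__ == "__main__":
    for n in (70e9, 35e9, 17.5e9):
        print(f"{n / 1e9:5.1f}B params -> loss {loss(n):.4f}")
    print(f"halving the model costs about {halving_penalty():.1%} in loss")
```

Under these assumed constants, halving the model raises the loss by only a few percent, which is the “half as big, fraction of the performance” trade described above. Real models would need their own fitted constants, and loss is not the same thing as benchmark performance.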