I have been seeding public torrent trackers for a few years and recently learned about Usenet.

I figured I’d take this shot in the dark and see if I can get a peek behind the curtain into the world of private trackers and maybe meet some like-minded folk.

I have a NAS that I use as a 24/7 seedbox, and I will redownload media I already have just to raise my all-time upload and ratio. I like to treat it like a high score 😄

Although torrents benefit from decentralized file distribution, their usefulness still depends on seeders being available. Usenet keeps posts on centralized servers with impressive retention periods, often spanning several years. This is why, unlike torrents, it does not require seeding.

Thanks for the explanation, now it’s clicking in my brain why the Usenet trackers I hear about are all private… They likely wouldn’t be around very long if they were distributing linux ISOs from their own servers to the general public.

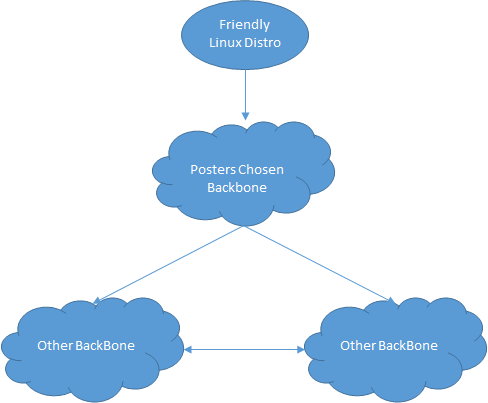

Usenet (or NNTP) relies on physical servers at set locations around the world, normally in large datacenters. These servers are owned or maintained by the “backbones”. For example, Giganews owns and maintains its own servers and sells access to them as either Giganews or supernews.com. They also sell access to third-party resellers, who brand the access as their own and sell it on to you. Giganews resellers include RhinoNewsgroups, Usenet.net and Powerusenet. These may use their own cache for recent content, but once past that they all share the same content.

When a “linux distro” is uploaded to Usenet, it is uploaded to one of these physical servers, either directly through a provider like Giganews or through a reseller. Which route is used doesn’t matter, because these servers are all connected via propagation agreements with all of the other backbones and caches, be it xsnews or Searchtech Limited. This process is called propagation, and it can take up to 40 minutes, or even longer, for the servers to become synced.

Here’s a little diagram:

As you can see, this is a cyclical process: the poster’s chosen provider gets the article, and then other servers go “oh, what do you have that we don’t? Let’s grab that”. This repeats until the servers are >=99% complete. When a poster uploads, they normally include par files amounting to about 10% of the overall release, so if 5% of the release is damaged due to poor transfer or such, your download client can grab these par files and repair the damaged sections - kinda cool!
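
If it helps to see that as code, here is a toy Python sketch of the two ideas above: servers copying whatever their peers have that they lack, and the rough rule that par files can repair about as much data as they contain. The server names, the peering layout and the function names are all made up for illustration, and real providers and par2 work at a much finer level of detail than this.

```python
# Toy model of Usenet propagation and par repair; not how any real provider works.

def propagate(servers, peers):
    """Run one sync round: each server copies articles its peers have and it lacks."""
    # Work from a snapshot so an article moves at most one hop per round.
    snapshot = {name: set(articles) for name, articles in servers.items()}
    for name, neighbours in peers.items():
        for other in neighbours:
            servers[name] |= snapshot[other]
    return servers


def rounds_until_synced(servers, peers, max_rounds=10):
    """Count sync rounds until every server holds every article."""
    everything = set().union(*servers.values())
    for round_no in range(1, max_rounds + 1):
        propagate(servers, peers)
        if all(articles == everything for articles in servers.values()):
            return round_no
    return None  # did not finish syncing within max_rounds


def can_repair(damaged_fraction, par_fraction=0.10):
    """Assumption: par files can rebuild roughly as much data as they contain."""
    return damaged_fraction <= par_fraction


# Hypothetical server names; the poster uploads 100 articles to "backbone_a" only.
servers = {
    "backbone_a": set(range(100)),
    "backbone_b": set(),
    "backbone_c": set(),
}
# Peering links: a <-> b and b <-> c, so c only hears about the post via b.
peers = {
    "backbone_a": ["backbone_b"],
    "backbone_b": ["backbone_a", "backbone_c"],
    "backbone_c": ["backbone_b"],
}

print(rounds_until_synced(servers, peers))  # rounds needed for all three to match
print(can_repair(0.05))  # 5% damaged with 10% par files
print(can_repair(0.15))  # 15% damaged with 10% par files
```

With this layout the post needs two rounds to reach every server, because `backbone_c` can only get it once `backbone_b` has it. That hop-by-hop delay is the same reason real propagation takes a while.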

Wow, thanks for taking the time to type that explanation! Your response was much easier to understand than what I got trying to google it.

It’s clear now that a private torrent tracker is more in line with what I originally wanted, but I’ll hang around the Usenet community to try and learn more as it seems really cool. I kinda wish I had found out about it 20 years ago, but it’s never too late to learn!