I’m a retired Unix admin. It was my job from the early '90s until the mid '10s. I’ve kept somewhat current ever since by running various machines at home. So far I’ve managed to avoid using Docker at home even though I have a decent understanding of how it works: after I stopped being a sysadmin in the mid '10s, I still worked for a technology company and did plenty of “interesting” reading and training.

It seems that more and more stuff that I want to run at home is being delivered as Docker-first and I have to really go out of my way to find a non-Docker install.

I’m thinking it’s no longer a fad and I should invest some time getting comfortable with it?

Don’t learn Docker, learn containers. Docker is merely one of the first runtimes, and a rather shit one at that (it’s a bunch of half-baked projects - container signing as one major example).

Learn Kubernetes, k3s is probably a good place to start. Docker-compose is simply a proprietary and poorly designed version of it. If you know Kubernetes, you’ll quickly be able to pick up docker-compose if you ever need to.

You can use `buildah bud` (part of the Podman ecosystem) to build containerfiles (exactly the same thing as dockerfiles without the trademark). Buildah can also be used without containerfiles (your containerfile simply becomes a script in the language of your choice - e.g. bash), which is far more versatile.

Speaking of Podman, if you want to keep things really simple you can manually create a bunch of containers in a pod and then ask Podman to create a set of systemd units for you. Podman supports nearly all of what docker does (with the exception of docker’s borked signing) and has identical command line syntax. Podman can also host a docker-compatible socket if you need to use it with something that really wants docker.

I’m personally a big fan of Podman, but I’m also a fan of anything that isn’t Docker: LXD is another popular runtime, and containerd is (IIRC) the runtime underpinning docker. There’s also firecracker or kubevirt, which go full circle and let you manage tiny VMs like containers.
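For anyone who wants to see the shape of the Podman route described above, here is a rough sketch. The image name `myapp`, the pod name `homepod`, the port mapping and the function names are made up for illustration. The commands themselves (`buildah bud`, `podman pod create`, `podman run --pod`, `podman generate systemd`) exist, though newer Podman releases steer people toward Quadlet instead of `generate systemd`. Nothing runs until you call the function, and `DRY_RUN=1` only prints the commands.

```shell
#!/bin/sh
# Run a command, or only print it when DRY_RUN is set.
run() {
    echo "+ $*"
    if [ -z "${DRY_RUN:-}" ]; then "$@"; fi
}

# Build an image from the Containerfile in the current directory, start it
# inside a pod, then ask Podman to write systemd unit files for the pod.
# "myapp", "myapp-web" and "homepod" are placeholder names.
pod_with_systemd_units() {
    run buildah bud -t myapp . &&
    run podman pod create --name homepod -p 8080:80 &&
    run podman run -d --pod homepod --name myapp-web myapp &&
    run podman generate systemd --files --name homepod
}
```

Try `DRY_RUN=1 pod_with_systemd_units` first to see what would be executed.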

All that makes sense - except that I’m talking about 1 or 2 physical servers at home and my only real motivation for looking into containers at all is that some software I’ve wanted to install recently has shipped as docker compose scripts. If I’m going to ignore their packaging anyway, and massage them into some other container management system, I would be happier just running them on bare metal like I’ve done with everything else forever.

Acronyms, initialisms, abbreviations, contractions, and other phrases which expand to something larger, that I’ve seen in this thread:

| Fewer Letters | More Letters |
|---|---|
| DNS | Domain Name Service/System |
| Git | Popular version control system, primarily for code |
| HTTP | Hypertext Transfer Protocol, the Web |
| IP | Internet Protocol |
| LXC | Linux Containers |
| NAS | Network-Attached Storage |
| PIA | Private Internet Access brand of VPN |
| Plex | Brand of media server package |
| RAID | Redundant Array of Independent Disks for mass storage |
| SMTP | Simple Mail Transfer Protocol |
| SSD | Solid State Drive mass storage |
| SSH | Secure Shell for remote terminal access |
| SSL | Secure Sockets Layer, for transparent encryption |
| VPN | Virtual Private Network |
| VPS | Virtual Private Server (opposed to shared hosting) |
| k8s | Kubernetes container management package |
| nginx | Popular HTTP server |

15 acronyms in this thread; the most compressed thread commented on today has 10 acronyms.

[Thread #349 for this sub, first seen 13th Dec 2023, 17:15]

dude, im kinda you. i just jumped into docker over the summer… feel stupid not doing it sooner. there is just so much pre-created content, tutorials, you name it. its very mature.

i spent a weekend containerizing all my home services… totally worth it and easy as pi[hole] in a container!

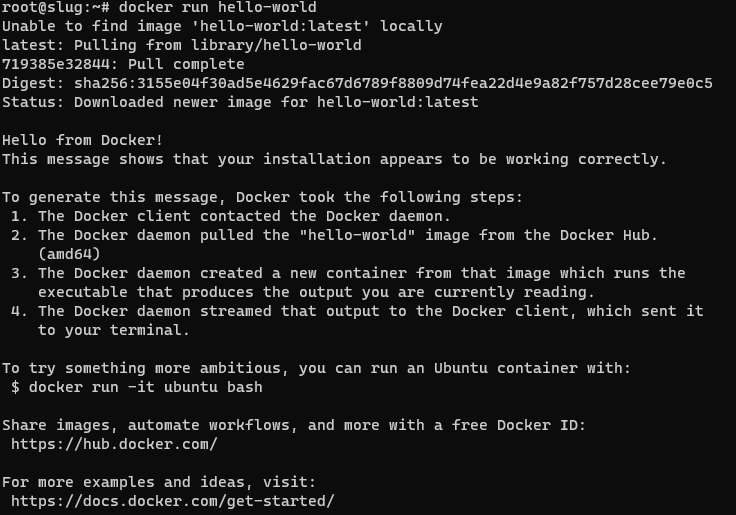

Well, that wasn’t a huge investment :-) I’m in…

I understand I’ve got LOTS to learn. I think I’ll start by installing something new that I’m looking at with docker and get comfortable with something my users (family…) are not yet relying on.

Forget `docker run`, `docker compose up -d` is the command you need on a server. Get familiar with a UI, it makes your life much easier at the beginning: portainer or yacht in the browser, lazy-docker in the terminal.

dockge is amazing for people that see the value in a gui but want it to stay the hell out of the way. https://github.com/louislam/dockge lets you use compose without trapping your stuff in stacks like portainer does. You decide you don’t like dockge, you just go back to cli and do your `docker compose up -d --force-recreate`.

`# docker compose up -d`

`no configuration file provided: not found`

You need to create a docker-compose.yml file. I tend to put everything in one dir per container so I just have to move the dir around somewhere else if I want to move that container to a different machine. Here’s an example I use for picard with examples of nfs mounts and local bind mounts with relative paths to the directory the docker-compose.yml is in. You basically just put this in a directory, create the local bind mount dirs in that same directory and adjust YOURPASS and the mounts/nfs shares, and it will keep working everywhere you move the directory as long as the machine has docker and the image is available for its architecture.

```yaml
version: '3'
services:
  picard:
    image: mikenye/picard:latest
    container_name: picard
    environment:
      KEEP_APP_RUNNING: 1
      VNC_PASSWORD: YOURPASS
      GROUP_ID: 100
      USER_ID: 1000
      TZ: "UTC"
    ports:
      - "5810:5800"
    volumes:
      # local bind mount, relative to the directory holding this file
      - ./picard:/config:rw
      # named volumes backed by NFS, defined below
      - dlbooks:/downloads:rw
      - cleanedaudiobooks:/cleaned:rw
    restart: always
volumes:
  dlbooks:
    driver_opts:
      type: "nfs"
      o: "addr=NFSSERVERIP,nolock,soft"
      device: ":NFSPATH"
  cleanedaudiobooks:
    driver_opts:
      type: "nfs"
      o: "addr=NFSSERVERIP,nolock,soft"
      device: ":OTHER NFSPATH"
```
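Moving one of these self-contained directories to another machine could look roughly like this. It is only a sketch: the function names and paths are invented, it assumes the stack has been stopped first (for example with `docker compose down`), and it only carries what lives inside the directory, so NFS-backed volumes stay where they are.

```shell
#!/bin/sh
# Pack one stack directory (compose file plus relative bind mounts) into a
# tarball in the current directory and print the tarball's name.
pack_stack() {
    stack_dir=$1                       # e.g. /srv/stacks/picard (hypothetical)
    name=$(basename "$stack_dir")
    parent=$(dirname "$stack_dir")
    tar -czf "$name.tar.gz" -C "$parent" "$name"
    echo "$name.tar.gz"
}

# Unpack the tarball under a destination directory on the new machine.
unpack_stack() {
    tarball=$1
    dest=$2
    mkdir -p "$dest"
    tar -xzf "$tarball" -C "$dest"
}
```

After unpacking, `cd` into the restored directory and run `docker compose up -d` there. File ownership may need a second look if the user IDs differ between the two machines.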

It just makes things easier and cleaner. When you remove a container, you know there is no leftover except mounted volumes. I like it.

It’s also way easier if you need to migrate to another machine for any reason.

It’s basically a vm without the drawbacks of a vm, why would you not? It’s hecking awesome

Why wouldn’t you want to use containers? I’m curious. What do you use now? Ansible? Puppet? Chef?

Not OP, but, seriously asking, why should I? I usually still use VMs for every app I need. Much more work I assume, but besides saving time (and some overhead and maybe performance) what would I gain from docker or other containers?

Saves time, minimal compatibility issues, portability, and you can update with 2 commands. There’s really no reason not to use docker.
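The comment doesn't say which two commands are meant. A common pairing for a compose-managed service, and the assumption in this sketch, is `docker compose pull` followed by `docker compose up -d`. The function name and the directory argument are illustrative.

```shell
#!/bin/sh
# Run a command, or only print it when DRY_RUN is set.
run() {
    echo "+ $*"
    if [ -z "${DRY_RUN:-}" ]; then "$@"; fi
}

# Update one compose-managed service: fetch newer images, then recreate
# only the containers whose image or configuration changed.
update_stack() {
    stack_dir=$1    # directory containing docker-compose.yml
    ( cd "$stack_dir" && run docker compose pull && run docker compose up -d )
}
```

Called as `update_stack /path/to/stack`. Persistent data survives as long as it lives in bind mounts or volumes.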

But I can’t really tinker IN the docker-image, right? It’s maintained elsewhere and I just get what I get. But with way less tinkering? Do I have control over the amount/percentage of resources a container uses? And could I just freeze a container, move it to another physical server and continue it there? So it would be worth the time to learn everything about docker for my “just” 10 VMs to replace in the long run?

You can tinker in the image in a variety of ways, but make sure to preserve your state outside the container in some way:

- Extend the image you want to use with a custom Dockerfile

- Execute an interactive shell session, for example `docker exec -it containerName /bin/bash`

- Replace or expose filesystem resources using host or volume mounts.

Yes, you can set a variety of resource constraints, including but not limited to processor and memory utilization.
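As a small illustration of those constraints, `docker run` accepts `--cpus` and `--memory`. The container name, image and the specific limits below are placeholders, not recommendations.

```shell
#!/bin/sh
# Run a command, or only print it when DRY_RUN is set.
run() {
    echo "+ $*"
    if [ -z "${DRY_RUN:-}" ]; then "$@"; fi
}

# Start a detached container capped at 1.5 CPU cores and 512 MiB of RAM.
# $1 = container name, $2 = image (both chosen by the caller).
start_limited() {
    run docker run -d --name "$1" --cpus 1.5 --memory 512m "$2"
}
```

For example `start_limited web nginx:stable`. Compose files can express comparable limits per service.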

There’s no reason to “freeze” a container, but if your state is in a host or volume mount, destroy the container, migrate your data, and resume it with a run command or docker-compose file. Different terminology and concept, but same result.

It may be worth it if you want to free up overhead used by virtual machines on your host, store your state more centrally, and/or represent your infrastructure as a docker-compose file or set of docker-compose files.

Hm. That doesn’t really sound bad. Thanks man, I guess I will take some time to read into it. Currently on proxmox, but AFAIK it does containers too.

It’s really not! I migrated rapidly from orchestrating services with Vagrant and virtual machines to Docker just because of how much more efficient it is.

Granted, it’s a different tool to learn and takes time, but I feel like the tradeoff was well worth it in my case.

I also further orchestrate my containers using Ansible, but that’s not entirely necessary for everyone.

I only use like 10 VMs, guess there’s no need for overkill with additional stuff. Though I’d like a gui, there probably is one for docker? I once tested a complete os with docker (forgot the name) but it seemed very unfriendly and overly convoluted.

Welcome to the party 😀

If you want a good video tutorial that explains the inner workings of docker so you understand what’s going on beneath the surface (without drowning in the details), let me know and I’ll paste it tomorrow. Writing from bed atm 😴

I’m also interested in that, please

I would absolutely look into it. Many years ago when Docker emerged, I did not understand it and called it “Hipster shit”. But also a lot of people around me who used Docker at that time did not understand it either. Some lost data, some had services that stopped working and they had no idea how to fix it.

Years passed and Containers stayed, so I started to have a closer look at it and tried to understand it: what you can do with it and what you cannot. As others here said, I also had to learn how to troubleshoot, because stuff now runs inside a container and you don’t just copy a new binary or library into a container to try to fix something.

Today, my homelab runs 50 Containers and I am not looking back. When I rebuilt my Homelab this year, I went full Docker. The most important reason for me was: Every application I run dockerized is predictable and isolated from the others (from the binary side, network side is another story). The issue I had earlier with my Homelab, when running everything directly on the box in Linux, was conflicts when, let’s say, one application needs PHP 8.x and another, older one still only runs with PHP 7.x. Or multiple applications depend on a specific library and, after updating it, one app works and the other doesn’t anymore because it would need an update too. Running an apt upgrade was always a very exciting moment… and not in a good way. With Docker I do not have these problems. I can update each container on its own. If something breaks in one Container, it does not affect the others.

Another big plus is the Backups you can do. I back up every docker-compose + data for each container with Kopia. Since barely anything is installed in Linux directly, I can spin up a VM, restore my Backups with Kopia and start all containers again to test my Backup strategy. Stuff just works. No fiddling with the Linux system itself adjusting tons of Config files, installing hundreds of packages to get all my services up and running again when I have a hardware failure.
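A backup loop along those lines might look like the sketch below. It assumes one directory per service under a common root, each holding a `docker-compose.yml` next to its data, and a Kopia repository that is already connected. That layout and the function name are assumptions, not details from the comment. `kopia snapshot create <path>` is the real Kopia command.

```shell
#!/bin/sh
# Run a command, or only print it when DRY_RUN is set.
run() {
    echo "+ $*"
    if [ -z "${DRY_RUN:-}" ]; then "$@"; fi
}

# Snapshot every stack directory under a root; directories without a
# docker-compose.yml are skipped.
backup_stacks() {
    stacks_root=$1
    for dir in "$stacks_root"/*/; do
        dir=${dir%/}                               # drop the trailing slash
        [ -f "$dir/docker-compose.yml" ] || continue
        run kopia snapshot create "$dir"
    done
}
```

Databases generally want to be stopped or dumped before their files are snapshotted, so treat this as a starting point.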

I really started to love Docker, especially in my Homelab.

Oh, and you would think you have a big resource usage when everything is containerized? My 50 Containers right now consume less than 6 GB of RAM and I run stuff like Jellyfin, Pi-Hole, Homeassistant, Mosquitto, multiple Kopia instances, multiple Traefik Instances with Crowdsec, Logitech Mediaserver, Tandoor, Zabbix and a lot of other things.

It seems like docker would be heavy on resources since it installs & runs everything (mysql, nginx, etc.) numerous times (once for each container), instead of once globally. Is that wrong?

You would think so, yes. But to my surprise, my well over 60 Containers so far consume less than 7 GB of RAM, according to htop. Also, of course Containers can network and share services. For external access for example I run only one instance of traefik. Or one COTURN for Nextcloud and Synapse.
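For a per-container view rather than the htop total, `docker stats --no-stream --format '{{.MemUsage}}'` prints one line per container, in a form like `512MiB / 7.6GiB`. The helper below is a hypothetical sketch that sums the first field of each line. It assumes that output format and the B/KiB/MiB/GiB unit suffixes.

```shell
#!/bin/sh
# Sum the "used" column of `docker stats` MemUsage lines read from stdin
# and print the total in MiB with one decimal place.
sum_mem_mib() {
    awk '{
        v = $1
        if      (v ~ /GiB$/) { sub(/GiB$/, "", v); total += v * 1024 }
        else if (v ~ /MiB$/) { sub(/MiB$/, "", v); total += v }
        else if (v ~ /KiB$/) { sub(/KiB$/, "", v); total += v / 1024 }
        else if (v ~ /B$/)   { sub(/B$/,   "", v); total += v / 1048576 }
    } END { printf "%.1f\n", total }'
}
```

Usage would be `docker stats --no-stream --format '{{.MemUsage}}' | sum_mem_mib`.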

Hi, also used to be a sysadmin and I like things that are simple and work. I like Docker.

Besides what you already noticed (that most software can be found packaged for Docker) here are some other advantages:

- It’s much lighter on resources and efficient than virtual machines.

- It provides a way to automate installs (docker compose) that’s (much) easier to get started with than things like Ansible.

- It provides a clear separation between configuration, runtime, and persistent data and forces you to get organized.

- You can group related services.

- You can control interdependencies, privileges, shared access to resources etc.

- You can define simple or complex virtual networking topologies between containers as you like.

- It adds extra security (for whatever that’s worth to you).
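To make the networking point from the list above concrete, here is a sketch that writes a small compose file with two networks. The database sits on an `internal` network that only the app can reach, and only the proxy publishes a port. The service names, `example/app:latest` and the password are placeholders.

```shell
#!/bin/sh
# Write an illustrative docker-compose.yml into the given directory.
write_topology() {
    target_dir=$1
    mkdir -p "$target_dir"
    cat > "$target_dir/docker-compose.yml" <<'EOF'
services:
  proxy:
    image: nginx:stable
    ports:
      - "8080:80"
    networks: [frontend]
  app:
    image: example/app:latest   # hypothetical application image
    networks: [frontend, backend]
  db:
    image: postgres:16
    environment:
      POSTGRES_PASSWORD: change-me
    networks: [backend]
networks:
  frontend: {}
  backend:
    internal: true              # no route to the outside world
EOF
}
```

Running `docker compose config` in that directory is a quick way to validate the file before starting anything.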

A brief description of my own setup, for ideas, feel free to ask questions:

- Router running OpenWRT + server in a regular PC.

- Server has 32 GB of RAM (bit overkill for now, black Friday upgrade, ran with 4 GB for years), Intel CPU with embedded GPU, OS on M.2 SSD, 8 HDD bays in Linux software RAID (MD).

- OS is Debian stable barebones, only Docker, SSH and NFS are installed on the host directly. Tip: use whatever Linux distro you know and like best.

- Docker is installed from their own repository, not from Debian’s.

- Everything else runs from docker containers, including things like CUPS or Samba.

- I define all containers with compose, and map all persistent data to host storage. This way if I lose a container or even the whole OS I just re-provision from compose definitions and pick up right where I left off. In fact destroying and recreating containers cleanly is common practice with docker.

Learning docker and compose is not very hard esp. if you were on the job.

If you have specific requirements eg. storage, exposing services over internet etc. please ask.

Note: don’t start with Podman or rootless Docker, start with regular Docker. It will be 10x easier. You can transition to the others later if you want.

It seems like docker would be heavy on resources since it installs & runs everything (mysql, nginx, etc.) numerous times (once for each container), instead of once globally. Is that wrong?

There’s nothing stopping you from using a single instance of those and only adding databases and config. The configs that come with projects set them up individually because they need to offer full examples but those configs are only meant as a guideline.
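With a single shared MariaDB/MySQL container, "only adding databases" for a new app comes down to a few SQL statements. The function below is a hypothetical sketch that prints them. The database name, user and password are supplied by the caller, and the container name `shared-db` in the usage note is made up.

```shell
#!/bin/sh
# Print the SQL that gives one more app its own database and user on an
# existing shared MariaDB/MySQL instance.
# $1 = database name, $2 = user name, $3 = password
new_app_sql() {
    db=$1
    user=$2
    password=$3
    cat <<EOF
CREATE DATABASE IF NOT EXISTS \`$db\`;
CREATE USER IF NOT EXISTS '$user'@'%' IDENTIFIED BY '$password';
GRANT ALL PRIVILEGES ON \`$db\`.* TO '$user'@'%';
EOF
}
```

The output can be piped into the database container, for example `new_app_sql wiki wiki_user 'secret' | docker exec -i shared-db mariadb -uroot -p"$ROOT_PW"` (older images ship the client as `mysql`). The app's own compose file then points at the shared instance instead of starting its own.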

Also keep in mind that the overhead of just running multiple instances isn’t very big. The resources are consumed when you start having connections and using CPU and storing data and so on, and those are going to be the same no matter how many instances you have.